The Missing Data Layer for Physical AI

Forklift-Simulator is not just a training tool; it is the world’s leading standardized platform for harvesting human skills to develop world models and physical AI.

We provide the foundational dataset for industrial embodied AI. We capture aligned intent, action, and outcome trajectories from human operators in physics-grounded environments.

AI has learned from text and video.

The next bottleneck is learning from action.

The world-model bottleneck is not compute: it is grounded action data

Every warehouse robot deployed today was trained without knowing why a human made the decisions it is trying to imitate. AI is moving from prediction to decision-making, but synthetic data alone misses the critical edge cases and the nuanced texture of real human decision-making. Industrial AI fails when it doesn’t understand how humans actually operate under safety and workflow constraints.

A 10-Year Head Start on Real-World Ground Truth

We aren’t just simulating data; we are harvesting human mastery.

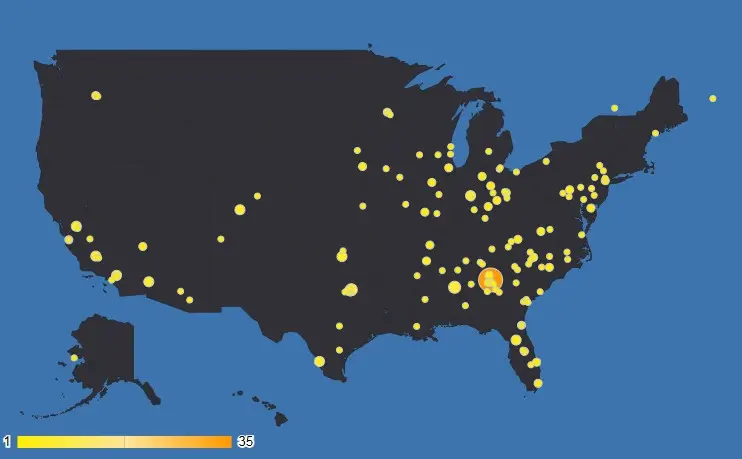

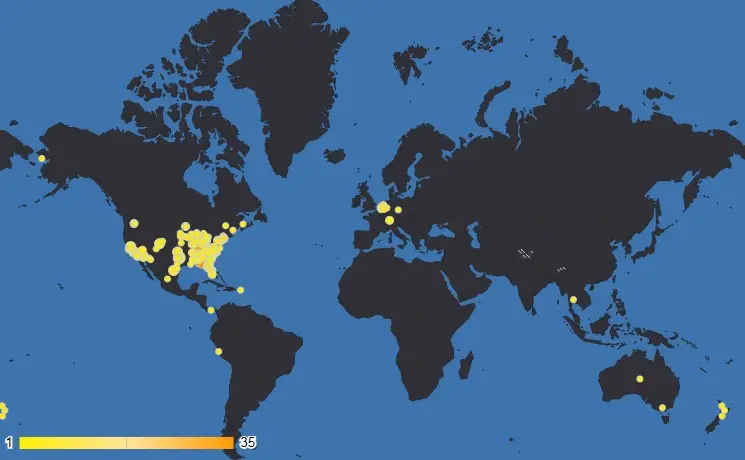

Over the past decade, we have built a proprietary data platform deployed across more than 500 simulators for ~300 customers in 17 countries. This scale allows us to collect high-quality, multimodal behavioral data on real OEM hardware, capturing the operational reality of logistics environments.

“Every action is captured with its intent and its consequence, giving step-level causality.”

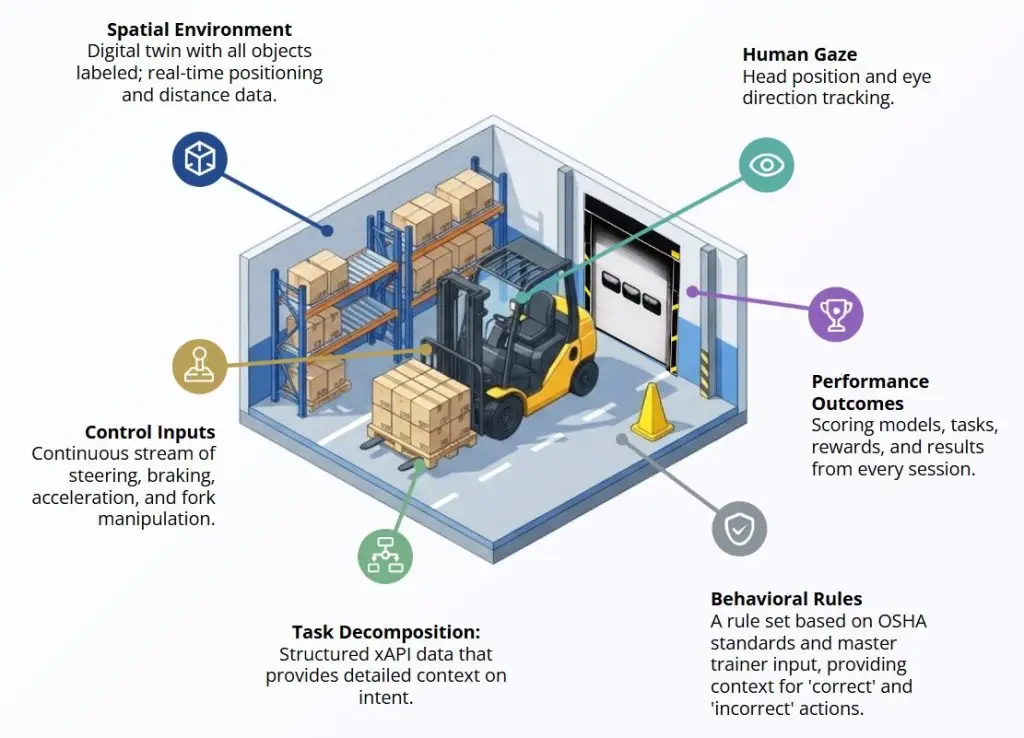

The Intent Layer: Causality, Action, and Outcome

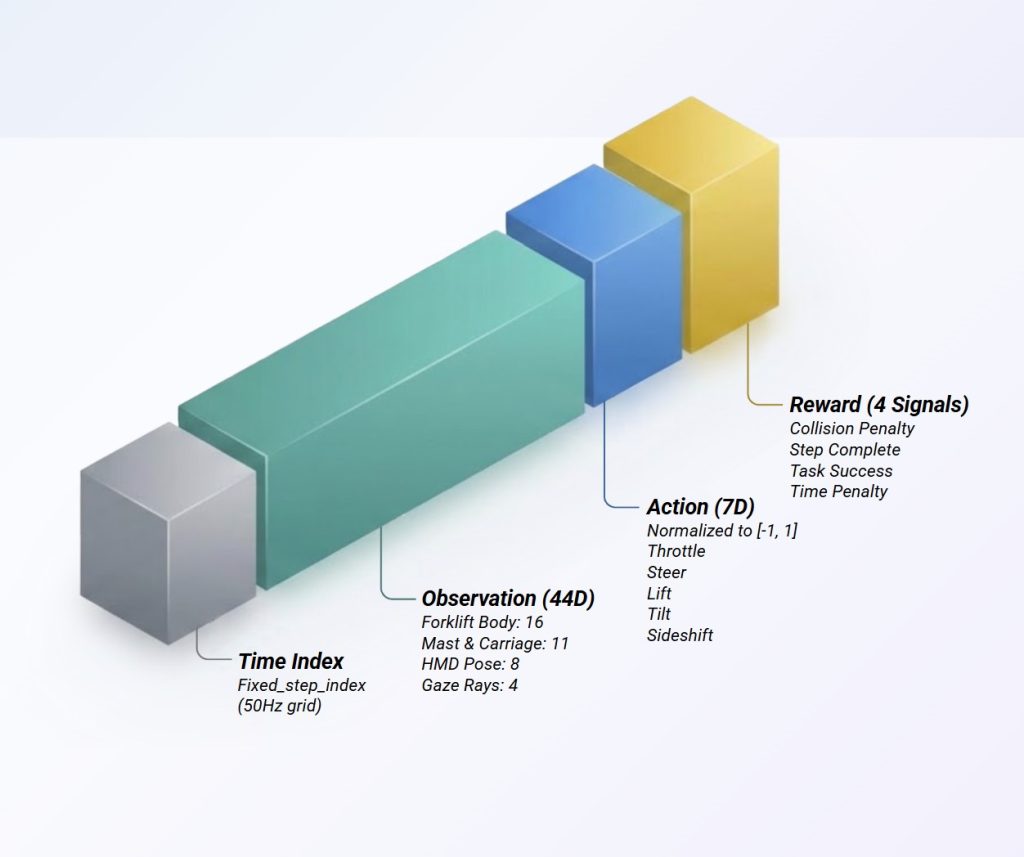

Most datasets show only behavior; we make the reward function and intent explicit. Our data is instrumented at every layer and locked to a single, deterministic clock.

- Action (Telemetry): High-fidelity 50 Hz stream of continuous control inputs, vehicle kinematics, and environmental interactions
- Outcome (Ground Truth): Recorded results of success, failure, collisions, and safety violations based on OSHA standards
- Intent (xAPI): Capturing the “why” by recording explicit task objectives, gaze rays, anticipatory head tracking, and step-level curriculum context
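To illustrate what locking every layer to one clock can mean in practice, here is a minimal Python sketch that maps timestamps onto a 50 Hz grid and gives each telemetry tick the most recent intent label. The event format, the label strings, and the function names are hypothetical and are not taken from the actual dataset schema.

```python
from bisect import bisect_right

TICK_HZ = 50  # sample rate stated in the text; everything else here is assumed


def tick_index(t_seconds: float) -> int:
    """Map a timestamp in seconds to the nearest index on the 50 Hz grid."""
    return int(round(t_seconds * TICK_HZ))


def label_ticks(n_ticks: int, intent_events: list) -> list:
    """Give each tick the most recent intent label at or before it.

    intent_events is a hypothetical list of (t_seconds, label) pairs,
    sorted by time. Ticks before the first event get None.
    """
    starts = [tick_index(t) for t, _ in intent_events]
    labels = []
    for k in range(n_ticks):
        i = bisect_right(starts, k) - 1  # last event starting at or before tick k
        labels.append(intent_events[i][1] if i >= 0 else None)
    return labels


# Hypothetical intent events: the operator approaches, then lifts.
events = [(0.02, "approach_pallet"), (0.06, "lift_load")]
tick_labels = label_ticks(5, events)
```

With a shared tick index, each action sample can be joined to the intent that was active when it was taken, which is the kind of step-level pairing the text describes.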

ML-Ready Format for Policy Learning

This is the first publicly available action dataset for warehouse physical AI that captures operator intent at the task level. It is distributed as JSONL and as a pre-built trajectory.parquet file aligned to a deterministic 50 Hz physics grid.

- Scale: Over 180,000 expert operator episodes
- Edge Cases: ~600,000 registered mistakes, safety violations, and edge cases too dangerous to collect in the real world
- 44D Observation Vector: Including vehicle body state, mast/carriage pose, operator HMD pose, and gaze rays
- 7D Action Vector: Normalized throttle, steer, lift, tilt, and sideshift inputs
- 4 Built-in Reward Signals: Explicit values for collision penalties, step completion, task success, and time efficiency
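As a rough illustration of how one step of such a trajectory might be consumed for policy learning, the Python sketch below parses a single JSONL line and combines the four reward signals into one scalar. The field names (`obs`, `action`, `rewards`), the reward key names, and the equal default weighting are assumptions made for this example; only the 44 and 7 dimensions and the count of four reward signals come from the description above.

```python
import json
from dataclasses import dataclass

OBS_DIM = 44  # observation vector size stated in the text
ACT_DIM = 7   # action vector size stated in the text
# Hypothetical key names for the four reward signals listed in the text.
REWARD_KEYS = ("collision", "step_completion", "task_success", "time_efficiency")


@dataclass
class Step:
    obs: list
    action: list
    rewards: dict


def parse_step(line: str) -> Step:
    """Parse one JSONL line (assumed layout) and check the stated dimensions."""
    rec = json.loads(line)
    step = Step(rec["obs"], rec["action"], rec["rewards"])
    if len(step.obs) != OBS_DIM:
        raise ValueError(f"expected {OBS_DIM} observation values, got {len(step.obs)}")
    if len(step.action) != ACT_DIM:
        raise ValueError(f"expected {ACT_DIM} action values, got {len(step.action)}")
    missing = [k for k in REWARD_KEYS if k not in step.rewards]
    if missing:
        raise ValueError(f"missing reward signals: {missing}")
    return step


def scalar_reward(step: Step, weights: dict = None) -> float:
    """Weighted sum of the four signals; equal weights are an arbitrary default."""
    if weights is None:
        weights = {k: 1.0 for k in REWARD_KEYS}
    return sum(weights[k] * step.rewards[k] for k in REWARD_KEYS)


# Synthetic example record, not real data.
example_line = json.dumps({
    "obs": [0.0] * OBS_DIM,
    "action": [0.0] * ACT_DIM,
    "rewards": {"collision": -1.0, "step_completion": 0.5,
                "task_success": 0.0, "time_efficiency": 0.25},
})
example_step = parse_step(example_line)
```

Keeping the four signals separate until the last moment lets a user reweight them, for example to penalize collisions more heavily, without reprocessing the trajectories.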

Frontier AI & World Models

World models need causally grounded experience. Use our explicit task structure, intent labels, and reward signals for goal-conditioned agents, RLHF reward modeling, and grounding world models in human behavior.

Industrial Robotics & Automation

Overcome the sim-to-real gap. Reduce deployment risk by giving your Autonomous Mobile Robots (AMRs) the behavioral priors and OSHA-compliant safety context they need to navigate complex, social warehouse environments natively.

RL: A Structured Human Action & Intent Dataset for Physical AI and World Models

Partner to structure, comment on, and publish datasets.

Open up the environment as a gym for a model contest within the community.

Act as a thought partner on the use of data for general AI development.

Unlocking the Power of Immersive Learning: The FAIRI Instructional Design Proposition

More than four years of close research partnership, with new publications on indicators upcoming

Ongoing research on rewards, RL agents, and synthetic data modeling

World-class xAPI contributors